Most advertisers treat Performance Max like a black box that either “works” or “doesn’t.” That’s a useful shorthand until it costs you margin. The real story in 2026 is neither hero nor villain. Google has made PMax far more transparent and capable, so the problems that remain are strategic and creative, not technical. In other words, the machine has improved and our habits haven’t caught up.

The core shift: transparency and smarter AI, not magic

Performance Max in 2026 is not the same opaque beast it was in 2021. Google has added channel-level reporting and deeper inventory visibility, and it has folded Gemini-grade models and creative tooling into the product. These changes mean you can finally see where conversions happen and get AI-assisted creative options, but that doesn’t mean you can stop thinking.

Why this matters is simple. When the platform was inscrutable, the natural reaction was to either hand everything over or abandon it. Now you can interrogate results and steer the algorithm. That is powerful, but only if you change how you plan, measure, and feed the system.

What’s actually new and why it matters

Channel and asset transparency

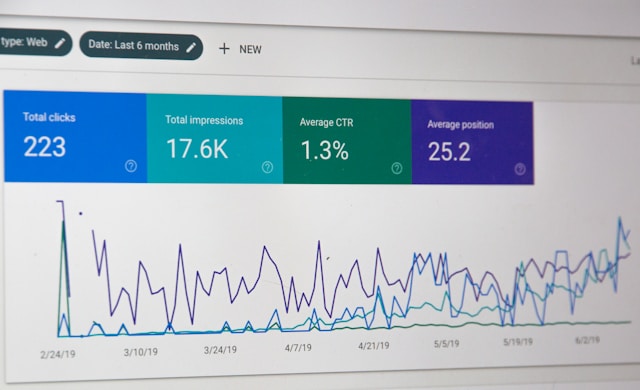

Google’s recent updates added channel performance reporting and better asset reporting, so you can see which placements, creatives, and channels are driving value rather than guessing. That turns PMax from a “spray and pray” setup into a measurable engine. Use that visibility to align bidding, creative, and budget allocation with real outcomes rather than impressions.

Smarter generative creative (Gemini)

Generative models are now integrated to help produce longer headlines, richer copy, and on-demand imagery. That speeds creative iteration, but it introduces a new risk: sameness. If everyone uses the same prompts and templates, your ads blend into a rising tide of polished mediocrity.

Better audience signals and asset controls

PMax now rewards thoughtful audience signals and the practice of splitting distinct audiences into purposeful asset groups. That lets you hint to the system rather than hand it a single blunt signal. Treat signals as guiding hypotheses, not hard targeting.

What’s not working and the painful truth

Creative fatigue is the real bottleneck

With automation optimizing delivery, performance decays when creative becomes predictable. The platform can optimize placement and bid, but it cannot conjure attention. Advertisers are seeing CTRs and conversion rates slide because creatives age, often quietly, while teams assume the algorithm will compensate. Refreshing creative is necessary, but so is deliberate variation. Different hooks, formats, and narrative frames should match different moments in the funnel.
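
One way to catch that quiet aging is to compare recent CTR against an earlier baseline for each asset group. The sketch below is a minimal illustration, not a Google Ads feature: the function names, the 7-day window, and the 20% drop threshold are all assumptions you would tune to your own account, and the daily CTR values are assumed to come from your own reporting export.

```python
def ctr_decay(daily_ctr: list[float], window: int = 7) -> float:
    """Relative change of the latest window's mean CTR versus the first window's mean.

    A result of -0.25 means CTR in the latest window is 25% below the baseline.
    """
    if len(daily_ctr) < 2 * window:
        raise ValueError("need at least two full windows of data")
    baseline = sum(daily_ctr[:window]) / window
    recent = sum(daily_ctr[-window:]) / window
    if baseline == 0:
        raise ValueError("baseline CTR is zero, decay is undefined")
    return (recent - baseline) / baseline


def is_fatigued(daily_ctr: list[float], window: int = 7, threshold: float = -0.20) -> bool:
    """Flag a creative when CTR has dropped by at least the (assumed) threshold."""
    return ctr_decay(daily_ctr, window) <= threshold


# Illustrative numbers only: one week at 5% CTR followed by one week at 3%.
aging_creative = [0.05] * 7 + [0.03] * 7
steady_creative = [0.05] * 14
```

A flag like this is a prompt to review the creative, not proof of fatigue; seasonality or a change in channel mix can move CTR just as easily.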

Over-automation without strategy

PMax gives the illusion of full control with less work. The trap is treating it like an autopilot: turn it on, set a goal, and expect growth. The algorithms optimize what you feed them. Poor inputs, including bad assets, weak conversion signals, and muddled audience signals, mean the machine optimizes toward mediocre outcomes. In 2026, automation amplifies your strategy. It does not replace it.

Measurement and incrementality still require discipline

Improved reporting reveals more, but it also exposes measurement complexity. Cross-device journeys, blended channel effects, and attribution quirks persist. PMax can drive conversions that would have happened elsewhere, and proving incremental value still needs experiments and holdouts. Do not assume last-click or platform-reported lifts equal true business impact. Run controlled tests where it matters.
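
The arithmetic behind a holdout test is simple enough to sketch. The example below assumes a hypothetical two-arm design (an exposed group and a holdout group) with conversions counted from a source independent of the platform; the `Cell` structure and all numbers are illustrative assumptions, and a real test would also need a significance check before anyone acts on the result.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Cell:
    """One arm of a holdout test (hypothetical structure, not a Google Ads object)."""
    users: int        # people, or geo population, assigned to this arm
    conversions: int  # conversions counted outside platform attribution


def incremental_lift(treatment: Cell, control: Cell) -> dict[str, Optional[float]]:
    """Compare conversion rates between an exposed arm and a holdout arm."""
    if treatment.users <= 0 or control.users <= 0:
        raise ValueError("both arms need a positive user count")
    rate_t = treatment.conversions / treatment.users
    rate_c = control.conversions / control.users
    # Conversions the exposed arm would have produced anyway, at the holdout rate.
    baseline = rate_c * treatment.users
    incremental = treatment.conversions - baseline
    return {
        "treatment_rate": rate_t,
        "control_rate": rate_c,
        "incremental_conversions": incremental,
        # Undefined when the holdout arm recorded no conversions.
        "relative_lift": (rate_t - rate_c) / rate_c if rate_c > 0 else None,
        # Share of exposed-arm conversions that the campaign actually added.
        "incremental_share": incremental / treatment.conversions
        if treatment.conversions
        else None,
    }


# Illustrative numbers only.
result = incremental_lift(
    Cell(users=100_000, conversions=1_200),
    Cell(users=50_000, conversions=500),
)
```

The `incremental_share` figure is the useful one for budget conversations: it estimates how much of what the platform reports would have happened without the campaign.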

Regulatory and competitive pressure

PMax’s consolidation of signals and inventory has drawn regulator attention in some regions. That means future product capabilities, especially around data portability and reporting, may shift in the coming quarters. Keep an eye on compliance and diversify channels so policy changes do not derail performance.

How to think about Performance Max in 2026 (not a checklist)

The single biggest mistake is asking, “How do I make PMax work?” instead of, “What does success look like when a large portion of spend is algorithmically allocated?” Change the question and your job changes.

- Stop optimizing line items and start defining intent. Build asset groups that represent distinct commercial intents such as discovery, consideration, and conversion, and feed them different value signals and creative languages.

- Treat AI as a creative co-pilot, not a source of shortcuts. Use generative models to expand ideas quickly, but curate and humanize what you run live.

- Make measurement an organizational discipline. Pair PMax campaigns with uplift studies, geo-tests, or holdout periods. Treat platform metrics as directional, not definitive.

Practical guardrails that actually move the needle

A few guardrails, pragmatic rather than dogmatic, help keep PMax honest.

- Use layered audience signals across asset groups so the algorithm learns differentiated paths to conversion. One mega-signal leads to muddled optimization.

- Rotate creative formats on a cadence tied to KPIs. Test hooks for awareness, offers for acquisition, and reassurance for retention. Rotation does not mean wholesale reinvention every week.

- Hold back a slice of budget for controlled experiments such as creative variants, landing page changes, and channel holdouts. If you cannot measure incrementality, you are steering blind.

- Monitor channel-level reporting weekly, not monthly. The faster you see shifts in where value comes from, the better you can allocate budget and pause wasteful placements.
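
The weekly channel check in the last guardrail can be reduced to a share-of-value comparison. This is a rough sketch under stated assumptions: the channel names and figures are made up, and the inputs are assumed to be conversion value per channel taken from whatever export of the channel report you use.

```python
def channel_share(conversion_value: dict[str, float]) -> dict[str, float]:
    """Convert absolute conversion value per channel into shares that sum to 1."""
    total = sum(conversion_value.values())
    if total <= 0:
        raise ValueError("total conversion value must be positive")
    return {channel: value / total for channel, value in conversion_value.items()}


def share_shift(last_week: dict[str, float], this_week: dict[str, float]) -> dict[str, float]:
    """Change in each channel's share of value, in share points (0.10 = +10 points)."""
    prev = channel_share(last_week)
    curr = channel_share(this_week)
    # Include channels that appear in only one of the two weeks.
    channels = sorted(set(prev) | set(curr))
    return {ch: curr.get(ch, 0.0) - prev.get(ch, 0.0) for ch in channels}


# Illustrative numbers only; channel names are assumptions.
last_week = {"search": 600.0, "youtube": 300.0, "display": 100.0}
this_week = {"search": 500.0, "youtube": 400.0, "display": 100.0}
shift = share_shift(last_week, this_week)
```

Comparing shares rather than raw totals keeps a week with higher overall spend from hiding a change in where the value is coming from.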

The trade-offs, because nothing is free

Using PMax aggressively accelerates scale and reduces tactical overhead, but it comes with trade-offs. You give up granular control over placements, risk encroachment on search budgets, and become more dependent on how the platform interprets your signals. The answer is not “don’t use it.” The answer is “use it with discipline.” Expect to give up some hands-on control in exchange for speed, but demand transparency and guardrails in return.

Conclusion: the new work is human

Performance Max in 2026 is less about a new tool and more about a new contract between humans and machines. Google shipped smarter models and better reporting, and the platform did its part. What remains is human work: sharper strategy, more imaginative creative, and disciplined measurement. If you treat PMax as the end of craft, you will underperform. If you treat it as an amplifier for better thinking, it will scale what matters. The machine will not save a weak idea, but it will make a strong one sing louder.